Topic: From EA Generation to DARWINEX Zero — Connecting the Dots

Over the last few months I’ve shared several parts of this work: EA Studio generation and settings, demo incubation, the FxBlue workflow, and Masters creation.

This post simply connects everything into one coherent process and shows where we are today.

1) The starting point

The starting point was simple.

Like everyone else, I began generating EAs like hell in May/June 2024.

But very quickly, the first real question came up:

How do you consistently produce, validate, and deploy multiple strategies at scale — without losing control?

2) Generation — creating the raw material

Everything starts with generation in EA Studio Reactor.

• building strategies

• defining rules and settings

• producing a large and diverse pool of EAs

At this stage, the objective is not perfection.

It is: breadth and diversity

3) Incubation — the first real filter

The first obvious step was setting up multiple demo accounts, which quickly turned into what we now call the incubation phase.

• running many EAs

• letting them accumulate trades

• observing real behavior over time

The goal here is simple: exposure to real data and initial validation

No shortcuts.

No assumptions.

Just letting strategies run.

Over time, this scaled.

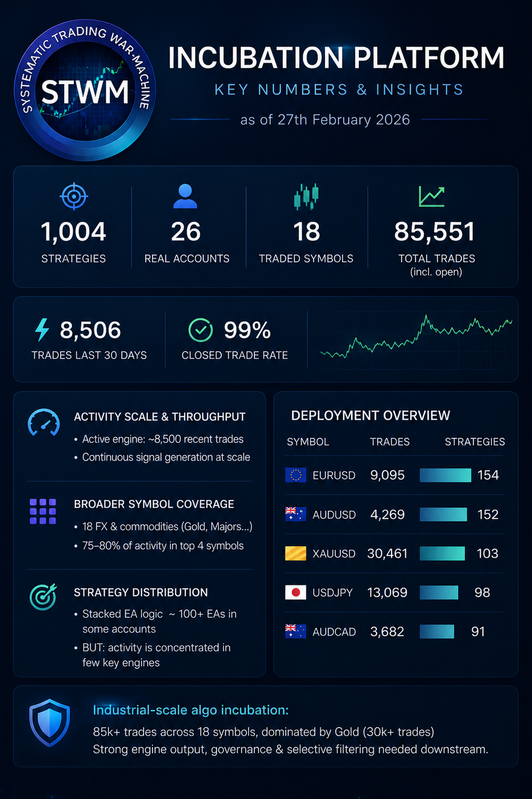

Today the environment is:

• ~30 MT4/MT5 instances

• ~1,000 EAs running

• ~86,000 cumulative trades

• ~9,000 trades per month
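For a sense of scale, here is the back-of-envelope arithmetic on the rounded figures above. These are plain averages; the real spread across instances and EAs is presumably uneven.

```python
# Approximate figures quoted in the post (all rounded)
instances = 30
eas = 1_000
cumulative_trades = 86_000
trades_per_month = 9_000

eas_per_instance = eas / instances              # average EAs per MT4/MT5 instance
trades_per_ea = cumulative_trades / eas         # average trades per EA so far
trades_per_ea_per_month = trades_per_month / eas

print(f"EAs per instance:        ~{eas_per_instance:.0f}")
print(f"Trades per EA (total):   ~{trades_per_ea:.0f}")
print(f"Trades per EA per month: ~{trades_per_ea_per_month:.0f}")
```

So an average EA contributes only about 9 trades a month, which is why letting strategies accumulate trades takes time.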

Every time I explain this setup, the reaction is usually the same: “You’re crazy.” Maybe.

But without this, I could not have learned what I know today.

At that scale, something important happens: you stop thinking in terms of individual strategies and start thinking in terms of systems

This is what the incubation layer looks like today:

4) The problem that emerged

As the number of strategies increased, a clear problem appeared:

• too many EAs

• too much data

• discretionary selection of top performers did not hold up live

• no consistent way to evaluate them

This is where the need became obvious: control and structure

5) Building the workflow — FxBlue + AI

To bring structure into the process, we needed two elements:

• a reliable data source

• a consistent way to process it

Then FxBlue became the connector.

It provides:

• unified tracking across accounts

• consistent trade data

• a stable base for analysis

On top of that, we built the workflow using AI. Not to predict markets, but to:

• process large volumes of data

• apply deterministic rules

• standardize classification

• generate repeatable outputs

This allowed us to move from:

• manual observation

to:

a structured, reproducible workflow
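To make "process large volumes of data" and "repeatable outputs" concrete, here is a minimal sketch of the aggregation step. It assumes the trade history has already been exported into simple records; the `Trade` fields (`account`, `magic`, `profit`) and the chosen metrics are hypothetical and are not the actual FxBlue export format or our exact rules.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Trade:
    account: str   # hypothetical: demo account label
    magic: int     # hypothetical: EA identifier (magic number)
    profit: float  # closed P&L in account currency


def summarize(trades):
    """Group closed trades by (account, magic) and compute basic metrics."""
    buckets = defaultdict(list)
    for t in trades:
        buckets[(t.account, t.magic)].append(t.profit)

    summary = {}
    for key, profits in buckets.items():
        gross_win = sum(p for p in profits if p > 0)
        gross_loss = -sum(p for p in profits if p < 0)
        summary[key] = {
            "trades": len(profits),
            "net_profit": sum(profits),
            # No losing trades yet: profit factor is undefined, reported as inf
            "profit_factor": gross_win / gross_loss if gross_loss > 0 else float("inf"),
            "win_rate": sum(p > 0 for p in profits) / len(profits),
        }
    return summary


trades = [
    Trade("demo-01", 1001, 12.5),
    Trade("demo-01", 1001, -5.0),
    Trade("demo-01", 1002, -3.0),
]
summary = summarize(trades)
print(summary[("demo-01", 1001)])
```

The same input always produces the same summary, which is the point: the evaluation no longer depends on who looks at the account or when.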

6) FxBlue Workflow — bringing order

With FxBlue as the data source and the workflow on top (upstream), strategies are no longer just running.

They are: continuously evaluated and classified based on objective rules

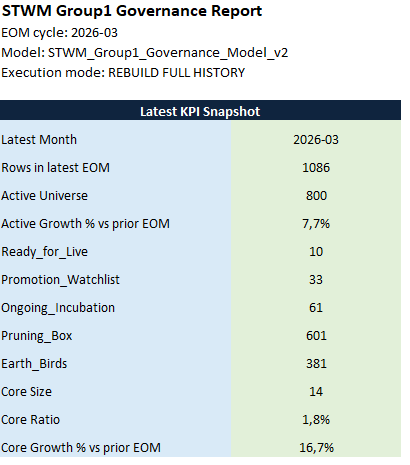

Over time, each strategy moves through defined states:

• Ongoing Incubation

• Promotion Watchlist

• Ready for Live

• Pruning Box

• Earth Birds

This transforms incubation from:

• a collection of EAs

into:

a controlled pipeline

FxBlue governance snapshot — distribution of strategies across the pipeline

From ~1,000 running strategies, only ~40 reach the Top Band and are considered for Masters.
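That funnel is roughly 4% (about 40 out of 1,000). A deterministic classifier of the kind described above could be sketched as follows. The metrics and every threshold here are hypothetical placeholders, not our actual criteria, and the sketch covers only four of the five states for brevity.

```python
def classify(trades: int, profit_factor: float, max_drawdown_pct: float) -> str:
    """Map a strategy's metrics to a pipeline state using fixed rules.

    All thresholds are illustrative assumptions.
    """
    if trades < 30:
        # Too few trades to judge either way
        return "Ongoing Incubation"
    if profit_factor < 1.0 or max_drawdown_pct > 25.0:
        return "Pruning Box"
    if trades >= 100 and profit_factor >= 1.5 and max_drawdown_pct <= 10.0:
        return "Ready for Live"
    if profit_factor >= 1.2:
        return "Promotion Watchlist"
    return "Ongoing Incubation"


print(classify(12, 2.0, 1.0))
print(classify(80, 0.8, 5.0))
print(classify(150, 1.6, 8.0))
print(classify(60, 1.3, 12.0))
```

Because the rules are evaluated in a fixed order, each strategy lands in exactly one state, and a rerun on the same data gives the same distribution.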

7) Masters — structuring what survived

When strategies reach the Top Band, they are not used directly. They are combined into Masters within Quant Analyzer.

Masters are: structured portfolios of validated EA Studio strategies

Built to balance:

• size

• symbols

• assets

• equity behavior

The objective is not to find the best combination.

It is:

to verify that validated strategies remain stable once combined into a portfolio structure

This is where individual strategies become a portfolio — return and risk combined
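The stability check can be illustrated with a small sketch. It is not what Quant Analyzer does internally; it only shows the idea of summing per-strategy P&L into one curve and comparing drawdowns, plus a simple symbol balance. The strategy names, P&L numbers, and symbols are made up.

```python
from collections import Counter


def combine(pnl_by_strategy):
    """Sum aligned daily P&L series into one portfolio series."""
    return [sum(day) for day in zip(*pnl_by_strategy.values())]


def max_drawdown(pnl):
    """Largest peak-to-trough drop of the cumulative P&L curve."""
    equity = peak = worst = 0.0
    for p in pnl:
        equity += p
        peak = max(peak, equity)
        worst = max(worst, peak - equity)
    return worst


def symbol_shares(symbols_by_strategy):
    """Fraction of strategies trading each symbol."""
    counts = Counter(symbols_by_strategy.values())
    total = sum(counts.values())
    return {sym: n / total for sym, n in counts.items()}


# Made-up daily P&L for two strategies
pnl = {"EA_A": [10, -20, 15, 5], "EA_B": [-5, 15, -10, 10]}
portfolio = combine(pnl)

print(portfolio)                   # [5, -5, 5, 15]
print(max_drawdown(pnl["EA_A"]))   # 20.0
print(max_drawdown(pnl["EA_B"]))   # 10.0
print(max_drawdown(portfolio))     # 5.0
print(symbol_shares({"EA_A": "EURUSD", "EA_B": "XAUUSD"}))
```

In this toy case the combined drawdown is smaller than either strategy's own drawdown, which is the behavior a Master is supposed to confirm rather than assume.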

8) Master Governance — keeping the structure clean

Once the Masters (Darwinex demo accounts) are running, a second, downstream layer of control is applied.

This layer focuses on:

• removing clear failures

• monitoring degradation

• tracking inactivity

• maintaining structure over time

The goal is not to re-evaluate everything again.

It is:

to keep the signal pool clean and controlled
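As a rough illustration of the checks listed above, the sketch below flags a strategy inside a Master as inactive, failed, or degrading. The function name, inputs, and default limits (30 idle days, 20% drawdown, profit factor below 1.0) are assumptions chosen for the example, not our real governance settings.

```python
from datetime import date


def governance_flags(last_trade: date, today: date,
                     drawdown_pct: float, recent_pf: float,
                     max_idle_days: int = 30,
                     max_dd_pct: float = 20.0,
                     min_pf: float = 1.0):
    """Return a list of governance flags; an empty list means nothing to do."""
    flags = []
    if (today - last_trade).days > max_idle_days:
        flags.append("inactive")
    if drawdown_pct > max_dd_pct:
        flags.append("failure")      # clear failure: candidate for removal
    elif recent_pf < min_pf:
        flags.append("degrading")    # still alive, but losing its edge
    return flags


today = date(2025, 3, 1)
print(governance_flags(date(2025, 1, 1), today, 5.0, 1.4))    # ['inactive']
print(governance_flags(date(2025, 2, 25), today, 25.0, 1.4))  # ['failure']
print(governance_flags(date(2025, 2, 25), today, 8.0, 0.9))   # ['degrading']
print(governance_flags(date(2025, 2, 25), today, 8.0, 1.3))   # []
```

Note that this layer only looks for reasons to act; it does not re-score the strategy from scratch.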

9) Moving from demo to real

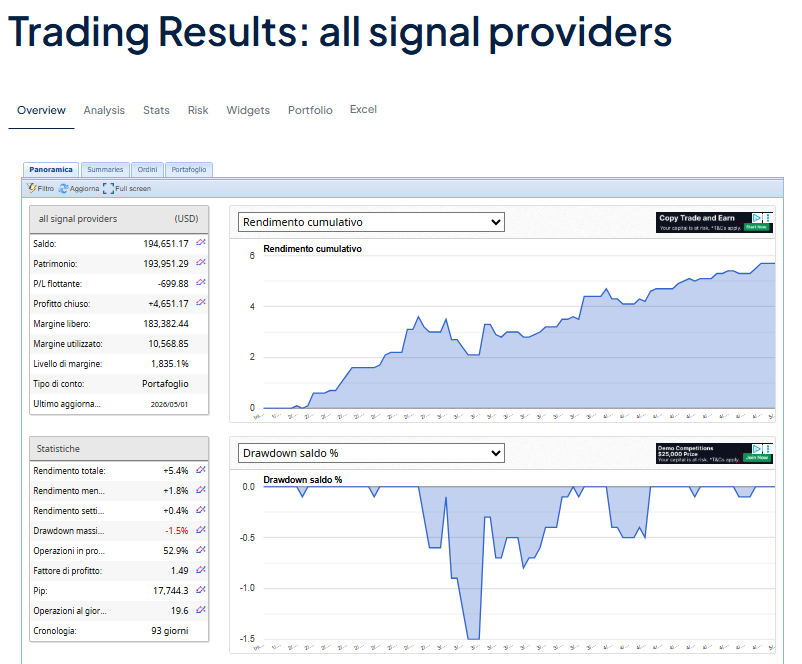

Moving EAs directly from demo to real accounts does not work reliably. The risk of falling back into poor performance is simply too high. So we moved to a different approach: signal copy trading.

We do not reinstall EAs on real accounts:

• we open real accounts

• we select the best Masters of the month

• we copy trades from Masters into real accounts

The goal is not to overcomplicate the structure.

It is:

to preserve performance by keeping execution in the same environment where it was validated
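Selecting "the best Masters of the month" can be made mechanical. The sketch below ranks Masters by monthly return divided by drawdown and keeps the top few. This scoring rule, the `pick_masters` name, and the sample numbers are hypothetical; the post does not specify the actual selection metric.

```python
def pick_masters(monthly_stats, top_n=2):
    """Rank Masters by return/drawdown and return the top_n names.

    monthly_stats: {name: (return_pct, max_drawdown_pct)}
    Masters with a non-positive monthly return are excluded.
    """
    eligible = {n: (r, dd) for n, (r, dd) in monthly_stats.items() if r > 0}

    def score(item):
        _, (ret, dd) = item
        return ret / max(dd, 0.1)  # floor avoids division by zero

    ranked = sorted(eligible.items(), key=score, reverse=True)
    return [name for name, _ in ranked[:top_n]]


stats = {
    "Master A": (4.0, 2.0),   # score 2.0
    "Master B": (6.0, 6.0),   # score 1.0
    "Master C": (-1.0, 3.0),  # excluded: negative month
    "Master D": (3.0, 1.0),   # score 3.0
}
selected = pick_masters(stats)
print(selected)  # ['Master D', 'Master A']
```

The selected Masters are then the sources for the trade copier; nothing is reinstalled on the real accounts.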

10) Where we are today

After:

• generation

• incubation

• building the workflow

• classifying strategies

• structuring Masters

• validating signals

we now have: a structured and continuously filtered set of strategies

11) Next step — deployment

Tomorrow we move to the next phase: the launch of two new DARWINEX Zero portfolios.

These will:

• select validated Masters and copy their trades

• help us understand how Darwinex risk calibration behaves on our structure

• apply portfolio construction rules

• target seed allocation (~2 months) and investor capital (~6 months)

Final thought

This is not about a single EA. It is about building a process that:

• generates strategies

• accumulates data

• creates structure

• filters signals

• and only then deploys them

We might still be wrong. But one thing became clear:

the focus should not be on the single strategy, but on the system that manages and scales them

Of course, we’ll also share what happens with the new DARWINs.

P.S.: Last but not least, all of this was possible thanks to a team of five traders working together toward a shared goal. Thanks to this forum, two more members will join us next week. I can’t wait to have them on board.

Vincenzo